ID3: This algorithm chooses each split by calculating information gain, which is computed recursively for each level of the tree.

C4.5: This algorithm is a modification of the ID3 algorithm. It uses information gain or gain ratio to select the best attribute, and it can handle both continuous and missing attribute values.

CART (Classification and Regression Tree): This algorithm can produce classification as well as regression trees. In a classification tree, the target variable takes one of a fixed set of classes. In a regression tree, the target variable is a numeric value to be predicted.

Decision tree classification using Scikit-learn

We will use the scikit-learn library to build the model, along with the iris dataset, which is already included in scikit-learn (it can also be downloaded separately). The dataset contains three classes, Iris Setosa, Iris Versicolour, and Iris Virginica, described by four attributes: sepal length, sepal width, petal length, and petal width. We have to predict the class of the iris plant based on its attributes.
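As a minimal sketch of the iris classification task described above, the snippet below trains a `DecisionTreeClassifier` on the bundled dataset. The 70/30 split, the `random_state` values, and the choice of `criterion="gini"` are assumptions made for illustration, not settings taken from the article.

```python
from sklearn.datasets import load_iris
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Load the iris dataset that ships with scikit-learn.
iris = load_iris()
X, y = iris.data, iris.target  # 150 samples, 4 attributes, 3 classes

# Hold out 30% of the samples for testing (assumed split, for illustration).
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y
)

# Fit a classification tree; criterion="entropy" is the other common choice.
clf = DecisionTreeClassifier(criterion="gini", random_state=42)
clf.fit(X_train, y_train)

# Predict the class of each held-out plant and measure accuracy.
y_pred = clf.predict(X_test)
accuracy = accuracy_score(y_test, y_pred)
print(f"Test accuracy: {accuracy:.2f}")
```

Switching `criterion` to `"entropy"` makes the tree choose splits by information gain instead of Gini impurity; on a dataset this small the two usually produce similar trees.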

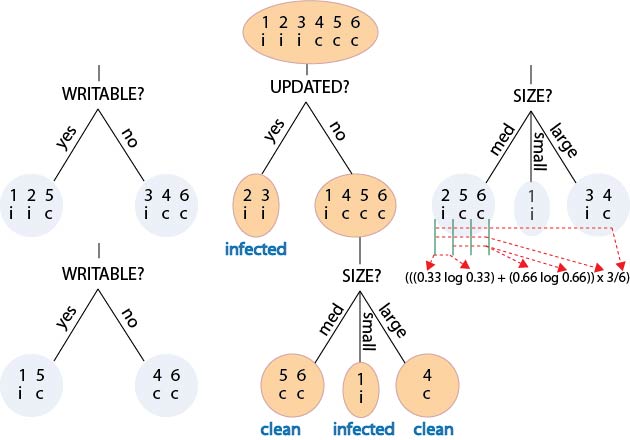

Let's look at decision trees in Python. The decision tree algorithm falls under the category of supervised learning algorithms, and it works for both continuous and categorical output variables. A decision tree is a flowchart-like structure in which each internal node represents a test on an attribute (e.g. whether a coin flip comes up heads or tails).

Begin with the entire dataset as the root node of the decision tree. The best attribute or feature is selected using an Attribute Selection Measure (ASM), and the selected attribute becomes the root node feature. An attribute selection measure is a technique for selecting the attribute that best discriminates among tuples. It ranks each attribute, and the best-ranked attribute is selected as the splitting criterion. The most popular selection measures include information gain, gain ratio, and Gini impurity.

To understand information gain, we must first be familiar with the concept of entropy. Entropy is the randomness in the information being processed. The higher the entropy, the harder it is to draw conclusions from the information. For a two-class problem, an entropy of 1 means that the subset is completely impure. Gini impurity is cheaper to compute than entropy because it does not require logarithms.
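The impurity measures discussed above can be sketched in a few lines of plain Python. The function names `entropy`, `gini`, and `information_gain`, and the fit/unfit labels, are hypothetical and chosen only for this illustration. Information gain is computed here as the parent's entropy minus the size-weighted entropy of the child subsets.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (base 2) of a list of class labels."""
    total = len(labels)
    return sum(-(n / total) * log2(n / total) for n in Counter(labels).values())


def gini(labels):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    total = len(labels)
    return 1.0 - sum((n / total) ** 2 for n in Counter(labels).values())


def information_gain(parent, children):
    """Parent entropy minus the size-weighted entropy of the child subsets."""
    weighted = sum(len(child) / len(parent) * entropy(child) for child in children)
    return entropy(parent) - weighted


# Hypothetical labels: a perfectly mixed two-class parent node.
parent = ["fit"] * 5 + ["unfit"] * 5

# A candidate split that separates the classes only partially.
left = ["fit"] * 4 + ["unfit"]
right = ["fit"] + ["unfit"] * 4

print("parent entropy:", entropy(parent))
print("parent gini:", gini(parent))
print("information gain:", information_gain(parent, [left, right]))
```

A split that separated the two classes completely would leave both children with zero entropy, so its information gain would equal the parent's entropy.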

Example: a decision tree for predicting whether a person is fit or unfit. The tree starts from the root node, where the most important attribute is placed. Each branch represents one part of the overall decision, and each leaf node holds the outcome of the decision.
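To make the root, branch, and leaf terminology concrete, here is a small hand-built tree in plain Python. The attributes (`age`, `eats_junk_food`, `exercises`) and the age threshold are made up for this sketch and are not taken from the article's figure.

```python
# Hypothetical fit/unfit tree: every attribute and threshold here is invented.
# Internal nodes are dicts holding a test; leaves are plain strings.
tree = {
    "test": lambda person: person["age"] < 30,  # root node
    "yes": {
        "test": lambda person: person["eats_junk_food"],
        "yes": "unfit",  # leaf
        "no": "fit",     # leaf
    },
    "no": {
        "test": lambda person: person["exercises"],
        "yes": "fit",    # leaf
        "no": "unfit",   # leaf
    },
}


def predict(node, person):
    """Follow one branch per test, from the root down to a leaf."""
    while isinstance(node, dict):
        branch = "yes" if node["test"](person) else "no"
        node = node[branch]
    return node


young_junk_eater = {"age": 25, "eats_junk_food": True, "exercises": False}
older_exerciser = {"age": 45, "eats_junk_food": False, "exercises": True}

print(predict(tree, young_junk_eater))
print(predict(tree, older_exerciser))
```

Each prediction visits exactly one node per level, so the cost of classifying a person grows with the depth of the tree, not with the total number of nodes.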

Hey! In this article, we will be focusing on the key concepts of decision trees in Python. So, let's get started.

Decision trees are among the easiest and most popular supervised machine learning algorithms for making predictions. The decision tree algorithm is used for regression as well as classification problems, and the resulting model is very easy to read and understand.

Decision trees are flowchart-like tree structures of all the possible solutions to a decision, based on certain conditions. The structure is called a decision tree because it starts from a root and then branches off into a number of decisions, just like a tree.

Decision tree algorithms such as ID3 use information gain to split a node. Both Gini and entropy are measures of the impurity of a node.
